Visual Intelligence

Outset's Visual Intelligence suite brings behavioral, emotional, and real-world context into AI-moderated interviews — enabling smarter probing, more context-aware insights, and faster, more confident decisions.

The only platform that perceives, interprets, and adapts in real time

First-generation AI moderation listened. Outset's Visual Intelligence goes further — watching screens, reading emotions, and observing real-world behavior to capture everything participants do, not just what they say.

Three types of intelligence

One unified research platform

Digital Intelligence

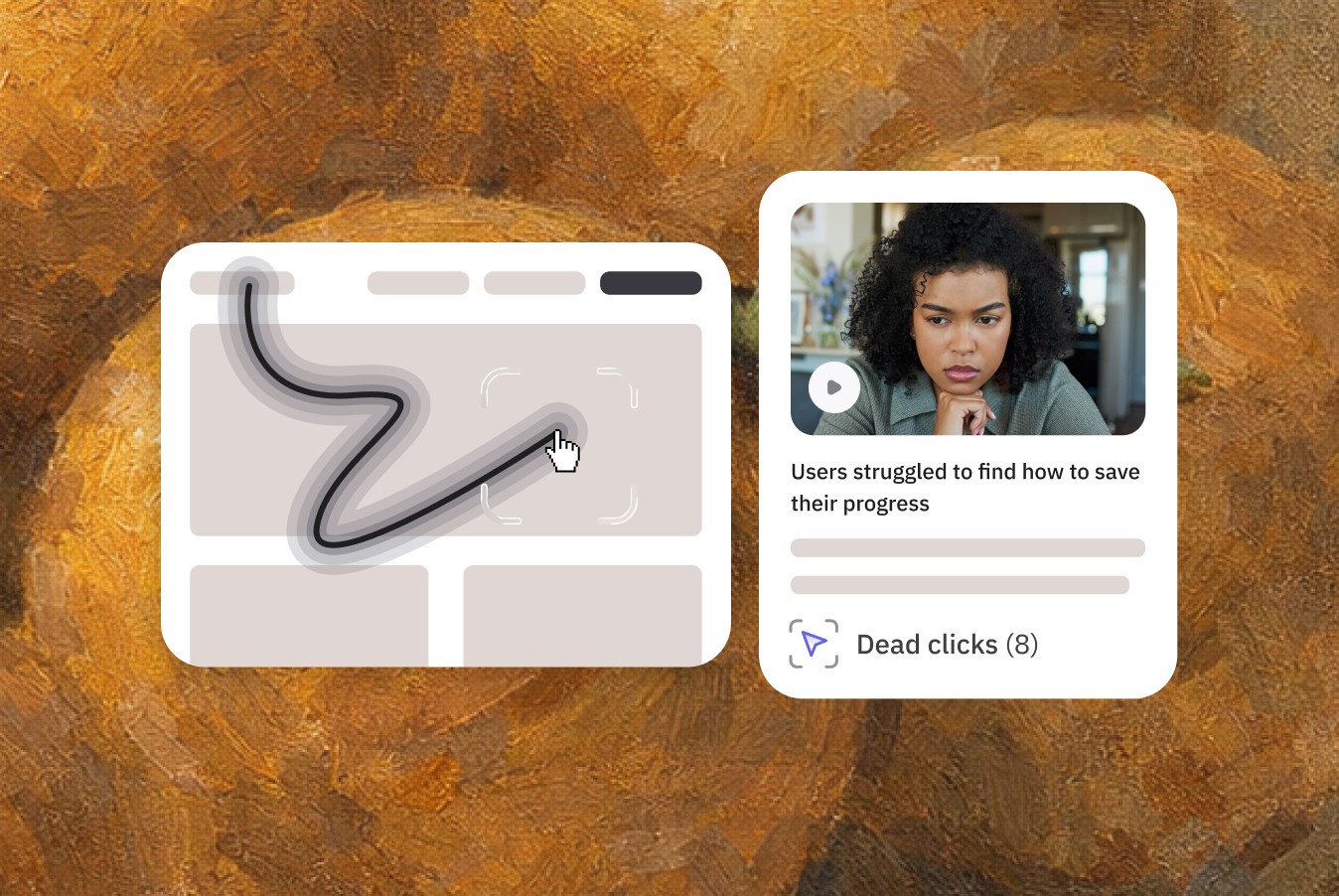

Monitor participant screens during usability studies to capture clicks, hovers, navigation paths, and moments of friction. The AI then probes automatically based on what it sees, and recurring themes are surfaced automatically in reporting.

Emotional Intelligence

Read facial expressions and changes in vocal tone in real time to detect how participants actually feel, not just what they say. The AI probes dynamically when emotions surface, so reporting captures the real “why.”

Physical Intelligence

Understand how participants interact with the real world. During unboxing studies, shopalongs, and more, Outset's AI analyzes real-world experiences on the spot to guide follow-up questions and deliver the most comprehensive, context-aware insights.

Built to make all studies more context-aware

Outset supports a wide range of study types, from digital usability testing to real-world behavioral research, all powered by the industry-leading Visual Intelligence suite.

The advantage of Outset's Visual Intelligence platform

Instant Aggregation of Observed Insights

Eliminate the manual work of reviewing screen recordings, tagging friction moments, and cataloging emotional cues. Now, all cues — verbal and non-verbal — are embedded directly into reporting.

Visual Intelligence FAQs

Explore how Outset’s Visual Intelligence suite uses AI to capture deeper behavioral, emotional, and real-world insights, helping teams run more context-aware, scalable AI-moderated research.

What is Visual Intelligence?

Visual Intelligence refers to the ability to observe, interpret, and respond to visual and behavioral signals during research. Within Outset’s Visual Intelligence tools, this includes analyzing screens, facial expressions, and real-world interactions to understand what participants do, not just what they say. This approach enables deeper, more context-aware insights in AI-moderated research.

How does Visual Intelligence software improve research insights?

Visual Intelligence software enhances insight quality by combining behavioral, emotional, and contextual data in real time. It eliminates the need for manual review of recordings and surfaces patterns automatically, helping teams uncover friction points, emotional reactions, and unspoken behaviors faster and more accurately.

What are the benefits of using Visual Intelligence AI in research?

Visual Intelligence AI enables more context-aware interviews by dynamically adapting follow-up questions based on what participants are doing and feeling. This leads to richer qualitative insights, faster time to analysis, and a clearer understanding of the gap between what users say and what they actually do.

How do Visual Intelligence tools differ from traditional research tools?

Traditional research tools rely heavily on self-reported feedback and manual analysis. In contrast, Visual Intelligence tools automatically capture and analyze visual and behavioral signals, making them more scalable and accurate. This shift allows researchers to gather deeper insights without increasing operational complexity.

Can a Visual Intelligence platform support both digital and real-world research?

Yes. A modern Visual Intelligence platform supports digital interactions like usability testing as well as real-world scenarios such as in-home studies and shopalongs. By combining multiple data sources, it provides a more complete view of user behavior across environments.

How does Visual Intelligence enhance AI-moderated research?

AI-moderated research becomes significantly more powerful with Visual Intelligence. By integrating Visual Intelligence software and real-time analysis, the AI moderator can adjust questions based on observed behaviors, emotional cues, and environmental context, resulting in more relevant probing and higher-quality insights at scale.